Why Most Physician-Built AI Tools Will Fail (And How to Build the Ones That Won't)

Medical Director, Atlanta Perinatal Associates | Founder, CodeCraftMD

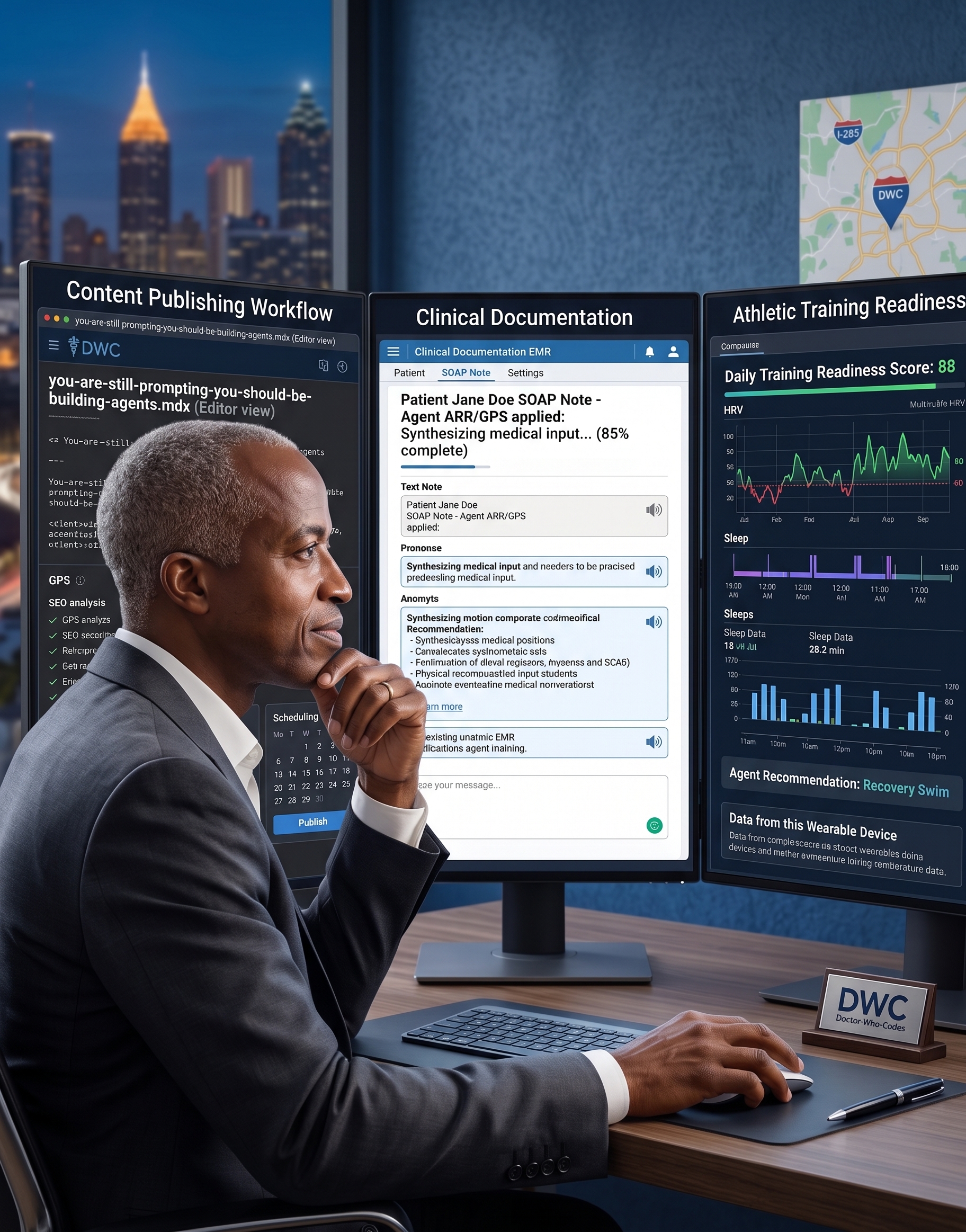

The adoption crisis in clinical AI isn’t technical — it’s generational. Here’s what 18 months building CodeCraftMD taught me about who actually uses physician-built tools.

By Chukwuma Onyeije, MD, FACOG

Building HIPAA-compliant AI tools for real clinical workflows

I spent last Tuesday watching a third-year resident use ChatGPT to draft her clinic notes while her attending physician — fifteen years into practice — manually transcribed the same encounter into Epic.

Both physicians. Same patient. Same documentation burden.

Completely different approaches to solving it.

This moment crystallized something I’ve observed while building CodeCraftMD over the past 18 months: The question isn’t whether physicians will adopt AI tools. It’s which physicians will adopt them first — and why that matters for everyone else.

The Tale of Two Clinical Cultures

Building a medical billing automation platform has given me a front-row seat to how different generations of physicians approach new technology. What I’ve found contradicts most vendor assumptions about clinical AI adoption.

Clinical Veterans: The Domain-Insight Advantage

After 20+ years in maternal-fetal medicine, I can diagnose systemic workflow problems in seconds:

- The billing code that never captures the actual clinical complexity
- The EHR click sequence that wastes 90 seconds per patient
- The handoff protocol that consistently loses critical information
- The documentation template that forces unnatural clinical reasoning

This pattern recognition is irreplaceable. No software engineer — however talented — can identify these friction points without living inside clinical flow for years.

We experienced physicians know exactly what’s broken. We’ve complained about it in physician lounges for decades.

But here’s our Achilles heel: Most of us trained when medical software was stable, certified, and changed once per decade. The modern AI ecosystem — with its weekly model updates, shifting APIs, and “move fast and break things” ethos — feels antithetical to medical culture.

Our strength: We know which problems actually matter.

Our barrier: We expect stability in a space that rewards rapid iteration.

AI-Native Trainees: The Workflow-Fluency Advantage

Meanwhile, today’s residents and medical students entered training already fluent in:

- Prompt engineering for clinical reasoning
- No-code automation for workflow hacks
- Rapid prototyping of custom tools
- Iterative experimentation without fear of “breaking” things

They don’t ask “Is this tool approved?” They ask “Does this solve my problem right now?”

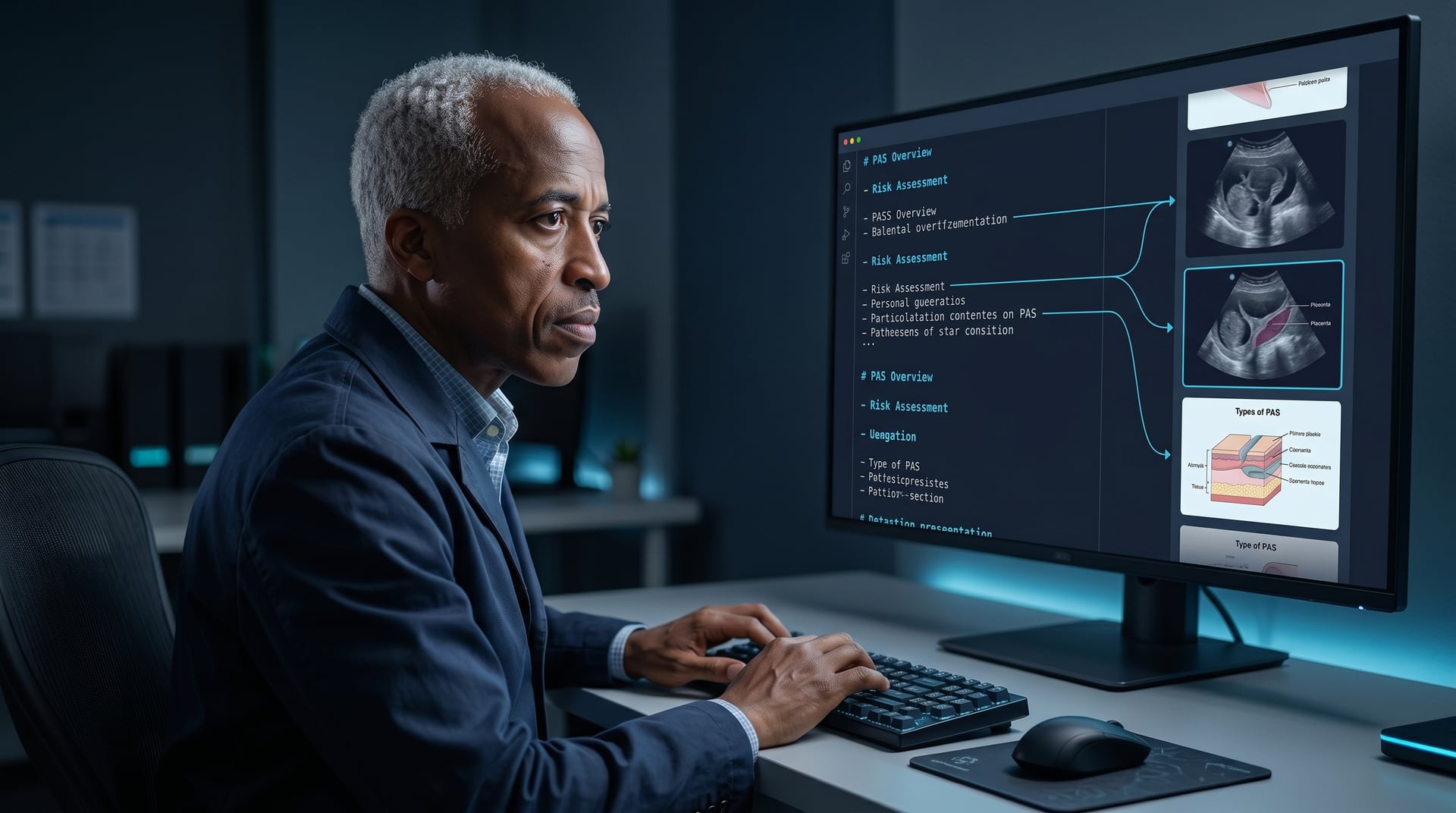

Imagine a resident builds a custom triage tool that auto-prioritizes patient callbacks based on keyword extraction from nursing messages. Technically elegant. Built over a weekend. Saves 30 minutes daily.

Then imagine it flags “patient reports decreased fetal movement at 32 weeks” as low-priority because the message doesn’t contain words like “urgent” or “emergency.”

Any MFM specialist knows that’s a potential sentinel event.

Their strength: Technical fluency meets operational fearlessness.

Their limitation: They haven’t yet attended enough funerals to know which shortcuts kill.

They can build fast — but clinical pattern recognition can’t be compressed into a weekend hackathon.
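To make that failure mode concrete, here is a minimal Python sketch. It is not the resident's tool or anything from CodeCraftMD. The function names, the urgency word list, and the red-flag list are all hypothetical, and only the "decreased fetal movement" phrase comes from the scenario above. It shows how keyword-only triage scores the message, and how a clinician-authored override might change the result.

```python
# Hypothetical sketch: names, word lists, and priority labels are illustrative only.
URGENCY_WORDS = {"urgent", "emergency"}

# Assumed clinician-authored phrases that must never be deprioritized.
# Only this one entry comes from the scenario in the post; a real list
# would have to be written and validated by specialists.
CLINICAL_RED_FLAGS = ("decreased fetal movement",)


def naive_priority(message: str) -> str:
    """Weekend-prototype logic: high priority only if an urgency word appears."""
    cleaned = message.lower().replace(",", " ").replace(".", " ")
    words = set(cleaned.split())
    return "high" if words & URGENCY_WORDS else "low"


def guarded_priority(message: str) -> str:
    """Same prototype, but a clinician-defined red flag is checked first."""
    text = message.lower()
    if any(flag in text for flag in CLINICAL_RED_FLAGS):
        return "high"
    return naive_priority(message)


msg = "Patient reports decreased fetal movement at 32 weeks"
print(naive_priority(msg))    # low
print(guarded_priority(msg))  # high
```

The override is a few lines of code. Knowing which phrases belong in that list is the part that takes years of practice, and simple phrase matching like this would still miss paraphrases, so treat it as an illustration of the gap rather than a safe design.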

The Bridge Is the Business Model

Here’s what most clinical AI companies miss: The adoption wedge isn’t veterans OR trainees. It’s veterans THROUGH trainees.

The most successful physician-built tools I’ve seen follow this pattern:

- Experienced clinician identifies authentic pain point (knows what to build)
- AI-native trainee prototypes rapid solution (knows how to build)
- Tool gets stress-tested in real clinical flow (bottom-up adoption)
- Senior physicians adopt after seeing it work (peer validation)
- Administration buys in once ROI is obvious (institutional scale)

Innovation in medicine doesn’t flow top-down. It spreads laterally through trusted peers.

This is why CodeCraftMD’s initial pilot is with MFM fellows who code. They have enough clinical depth to recognize real problems, enough technical fluency to iterate solutions, and enough institutional credibility to influence attending adoption.

The Real Question: Who Controls Clinical Software?

Here’s what keeps me up at night:

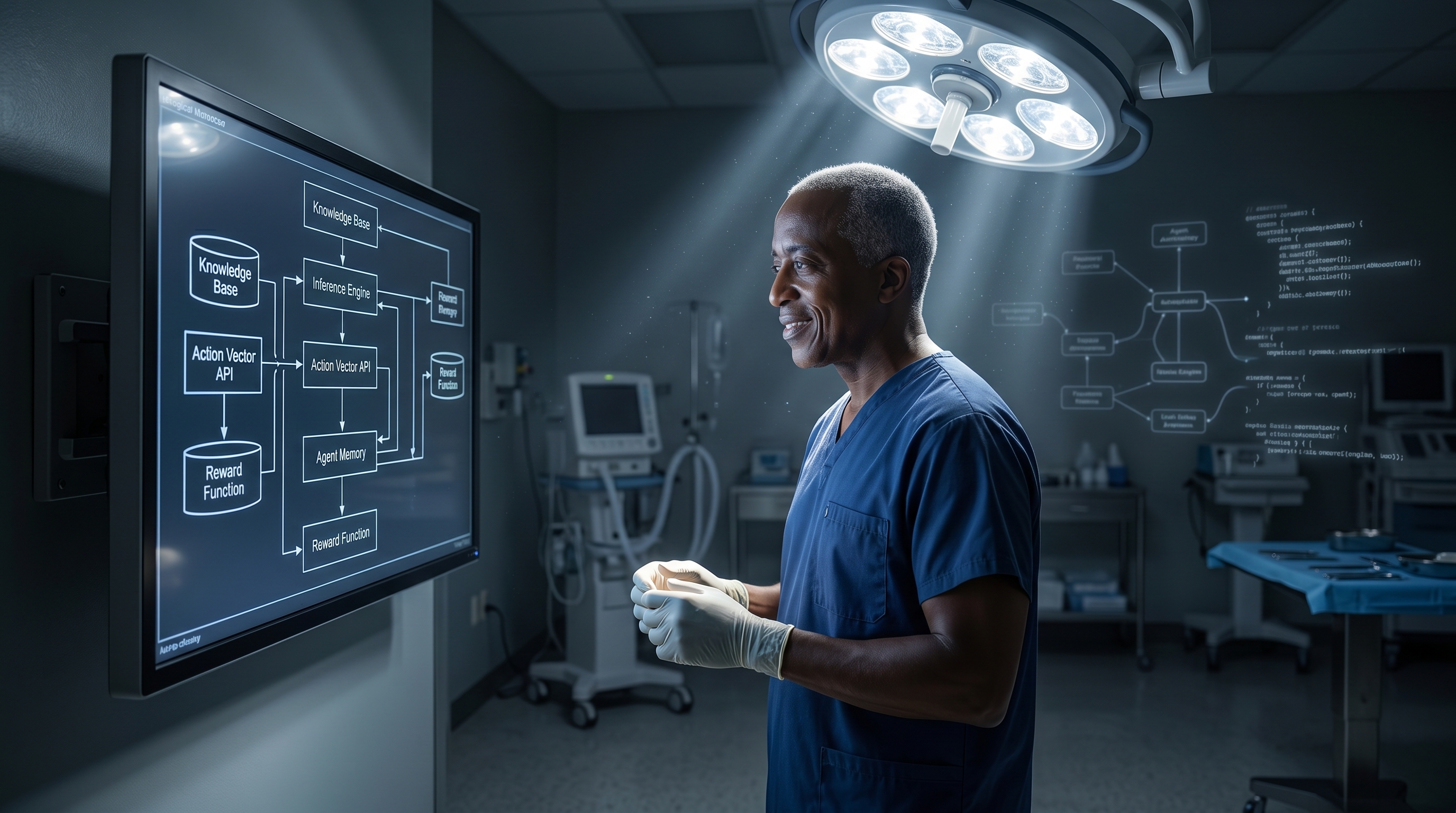

Every specialty will soon have AI-powered cognitive instruments. The question is who builds them.

If physicians don’t participate in this process, we’ll inherit tools designed by people who’ve never:

- Managed a precipitous breech delivery at 2 AM
- Explained a devastating diagnosis to a family
- Made a split-second clinical decision with incomplete information
- Navigated the cognitive load of 40 patients across three hospitals

Learning to code as a physician isn’t about becoming a software engineer.

It’s about:

- Preserving clinical epistemology in automated reasoning
- Ensuring safety constraints are clinically meaningful, not just technically compliant
- Designing interfaces that augment, rather than replace, physician judgment
- Retaining agency over the tools that mediate our practice

The physicians who learn to build now will shape the clinical software ecosystem for the next generation.

The rest will inherit whatever gets built for them.

Three Actions You Can Take This Week

If you’re an experienced clinician frustrated by broken workflows:

Join the next Doctors Who Code workshop where we translate clinical pain points into buildable prototypes. (Next session: February 2026 — [join the waitlist here])

If you’re a trainee already tinkering with AI tools:

Document what you’re building. Your workflow hacks today become tomorrow’s institutional solutions. Share them in the Doctors Who Code (DWC) community forum.

If you’re a medical educator shaping next-generation training:

Advocate for “Clinical Software Design” electives in your residency program. The physicians you’re training will spend more time interacting with software than with patients. They need to understand how it’s built.

The future of medicine isn’t physician vs. AI. It’s physician-built AI vs. vendor-built AI.

Which side of that divide do you want to be on?

— Chukwuma Onyeije, MD, FACOG

Writing about physician-built tools, clinical AI, and the future of medical software at DoctorsWhoCode.blog

Frequently Asked Questions

Why do most physician-built AI tools fail to get adopted?

The failure is usually not technical; it is generational. Tools built by experienced physicians often reflect deep clinical insight, but their builders tend to lack the technical fluency to iterate quickly. Tools built by AI-native trainees ship fast but may miss the clinical pattern recognition that prevents dangerous edge cases. Neither is sufficient alone. Successful tools require an experienced clinician's insight into what to build and AI-native technical fluency in how to build it.

What is the difference between how experienced physicians and AI-native trainees approach clinical software?

Experienced physicians have irreplaceable domain insight: they recognize broken workflows, know where clinical logic fails, and understand which safety constraints actually matter. AI-native trainees have technical fluency and operational fearlessness — they prototype quickly and iterate without hesitation. The risk is that trainees can build something technically elegant with a dangerous clinical blind spot. A triage tool that deprioritizes decreased fetal movement because the message lacks the word 'urgent' is a concrete example of that failure mode.

What is the adoption pattern for clinical tools that actually succeed?

Successful clinical tools spread laterally through trusted peers, not top-down from administration. The sequence: an experienced clinician identifies an authentic pain point, an AI-native trainee builds and iterates the prototype, the tool gets stress-tested in real clinical flow, senior physicians adopt after seeing it work in practice, and administration buys in once the ROI is visible. Innovation in medicine doesn't flow from the top — it propagates through peer trust.

Why should physicians build AI tools rather than waiting for enterprise vendors?

Vendor teams building clinical AI have not managed a precipitous delivery at 2 AM, explained a devastating diagnosis to a family, or made a split-second decision with incomplete information. Those experiences encode safety constraints, clinical epistemology, and interface requirements that no product specification document can capture. Physicians who build now preserve clinical reasoning in automated systems. Those who wait inherit tools built to someone else's priorities.

What is the generational divide in clinical AI adoption and why does it matter?

Experienced physicians (10+ years in practice) approach new software expecting stability, rigorous validation, and institutional approval. AI-native trainees expect tools to work immediately and are comfortable iterating without formal sign-off. This creates a gap: experienced physicians know which problems matter most but resist early adoption; trainees adopt quickly but may underweight clinical safety edge cases. Bridging this gap — through co-development between generations — is the structural opportunity in physician-built AI.

Next post: Inside CodeCraftMD — Why I built billing automation first (and what it taught me about clinical AI adoption)
