The Human Bottleneck: Why Medicine's Agentic Future Is Waiting on Us

AI agents can run roughly 50x faster than humans on the same task, but medicine captures only a fraction of that speed. The real bottleneck in agentic AI is not the technology. It is human capacity, clinical oversight, and workflows built for the wrong consumer. A physician-developer's perspective.

If you spend enough time around tech executives discussing artificial intelligence, you notice a pattern. The conversation is always about the model. How many parameters. How fast the inference. How much compute. The implicit assumption is that once the technology is powerful enough, everything else will fall into place.

I want to challenge that assumption directly. Because after watching a recent analysis of where agentic AI is actually headed — and after spending years building clinical software as a practicing Maternal-Fetal Medicine specialist — I am convinced that the technology is not the bottleneck.

We are.

What the Speed Numbers Actually Mean

Here is a claim worth sitting with: AI agents can operate roughly 50 times faster than a human completing the same task. That number comes from productivity benchmarking work that has been quietly circulating in enterprise AI circles over the last eighteen months. The implication is staggering. At that ratio, a single well-designed agent could in theory compress a 40-hour week of knowledge work into under an hour.

In practice, that compression almost never happens. Not because the model is slow. Because the human is slow.

Every agentic workflow has what engineers call a rate-limiting step — the node in the pipeline that determines how fast the whole system can move. In software development, in legal research, in financial analysis, early adopters are discovering the same uncomfortable truth: the rate-limiting step is the human in the loop. The approver. The reviewer. The person who has to read the output before it ships.

Medicine has the same problem. And medicine may have it worse.
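The rate-limiting step described above can be made concrete with a small sketch. The stage names and per-document times below are hypothetical, chosen only for illustration, and the model assumes a steady-state pipeline in which each stage works on a different document at the same time. Under that assumption, throughput is set entirely by the slowest stage.

```python
# Hypothetical per-document stage times in minutes (illustrative, not measured).
stages = {
    "agent_draft": 1.0,
    "human_review": 10.0,
    "sign_and_file": 2.0,
}


def rate_limiting_step(stage_minutes):
    """Return (name, minutes) for the slowest stage in the pipeline."""
    name = max(stage_minutes, key=stage_minutes.get)
    return name, stage_minutes[name]


def steady_state_throughput(stage_minutes):
    """Documents per hour, assuming stages run concurrently on different documents."""
    _, slowest_minutes = rate_limiting_step(stage_minutes)
    return 60.0 / slowest_minutes


# Speeding up the agent tenfold leaves throughput unchanged,
# because the agent was never the slowest stage.
faster_agent = dict(stages, agent_draft=0.1)

# Halving the review time doubles throughput.
faster_review = dict(stages, human_review=5.0)

print(rate_limiting_step(stages))
print(steady_state_throughput(stages))
print(steady_state_throughput(faster_agent))
print(steady_state_throughput(faster_review))
```

With these assumed numbers, a faster model buys nothing, while a faster review step pays off directly. That is the sense in which the human in the loop is the rate-limiting step.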

The EHR Was Not Built for This

Let me describe a workflow that happens hundreds of times a day at high-volume MFM practices like mine. A patient is referred for elevated risk. A consultation note needs to be generated. That note has to capture the clinical reasoning, the ultrasound findings, the diagnostic codes, the management plan, and the billing justification — all in a format that satisfies five or six different downstream consumers simultaneously: the referring provider, the hospital system, the payer, the quality metrics auditor, the patient, and potentially a malpractice attorney reviewing the record three years from now.

A skilled physician can do this in about twenty minutes if nothing interrupts them. An AI agent, given the right inputs and a well-designed prompt, can produce a structured draft in under sixty seconds.

That sixty-second draft still requires a physician to review it, modify it, sign it, and reconcile it with the EHR’s documentation architecture — which was designed in the early 2000s, optimized for billing compliance, and never imagined that the notes inside it would one day be generated by a language model.

The bottleneck is not the sixty seconds. The bottleneck is everything that happens after.

Three Places We Are Slowing Ourselves Down

I have built enough clinical AI tools now — through CodeCraftMD and my own practice workflows — to have some opinions about where the friction actually lives. It is not one problem. It is at least three.

The oversight problem. Medicine requires human judgment at the point of clinical decision. That is not bureaucratic conservatism — it is epistemologically correct. An AI agent does not carry malpractice liability. It cannot be deposed. It cannot feel the weight of a conversation with a family at 23 weeks of gestation. The physician has to be in the loop. But our current workflows treat oversight as an afterthought rather than a design primitive. We bolt the human onto the end of the AI process and wonder why everything feels slower than it should.

The interface problem. Most EHR systems were built for a world where the physician was both the generator and the consumer of clinical documentation. The physician typed; the physician read. Agentic AI breaks that assumption completely. The agent generates; the physician reviews. That is a fundamentally different cognitive task — and our interfaces were not designed for it. We are reviewing AI-generated notes through the same screen architecture we used to type those notes from scratch. It is like being asked to proofread a novel through a typewriter window.

The trust calibration problem. Physicians are trained to be appropriately skeptical. We are also, frankly, trained to be possessive of our documentation. The note is not just a record; it is an artifact of clinical reasoning. Handing that to an agent, even a very good one, requires a form of professional trust that most of us have not been taught to calibrate. So we over-review. We correct things that did not need correcting. We rewrite sentences that were accurate. We spend forty-five minutes reviewing a document that should have taken five. This is not irrationality. It is a reasonable response to a new tool we do not yet fully understand.
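To see what over-review costs, here is a back-of-the-envelope sketch using the figures already quoted in this post: about twenty minutes for a physician to write the note by hand, under sixty seconds for the agent's draft (rounded up to one minute here), and five versus forty-five minutes of review. Treating signing and EHR reconciliation as part of review time is a simplifying assumption, and the function names are hypothetical.

```python
def end_to_end_speedup(manual_min, draft_min, review_min):
    """Ratio of manual authoring time to agent-draft-plus-review time."""
    return manual_min / (draft_min + review_min)


def break_even_review_min(manual_min, draft_min):
    """Review time at which the agent workflow is no faster than writing by hand."""
    return manual_min - draft_min


MANUAL_MIN = 20.0  # physician writes the note unaided (figure from the post)
DRAFT_MIN = 1.0    # agent draft, "under sixty seconds", rounded up (assumption)

for review_min in (5.0, 45.0):
    speedup = end_to_end_speedup(MANUAL_MIN, DRAFT_MIN, review_min)
    print(f"review {review_min:.0f} min: {speedup:.2f}x")

print(f"break-even review time: {break_even_review_min(MANUAL_MIN, DRAFT_MIN):.0f} min")
```

On these numbers, a five-minute review yields a little over a threefold gain, far short of the twentyfold gain in drafting alone, and a forty-five-minute review makes the agent workflow slower than typing the note from scratch. Any review longer than nineteen minutes erases the benefit entirely.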

The Workflow Was Built for the Wrong Consumer

Here is the framing that has reorganized how I think about all of this.

The current clinical documentation workflow was designed with the physician as its primary input device. The physician sees the patient, thinks the thoughts, and types the record. Every piece of the infrastructure — the EHR templates, the billing codes, the audit requirements — assumes that the physician is the originator of the information.

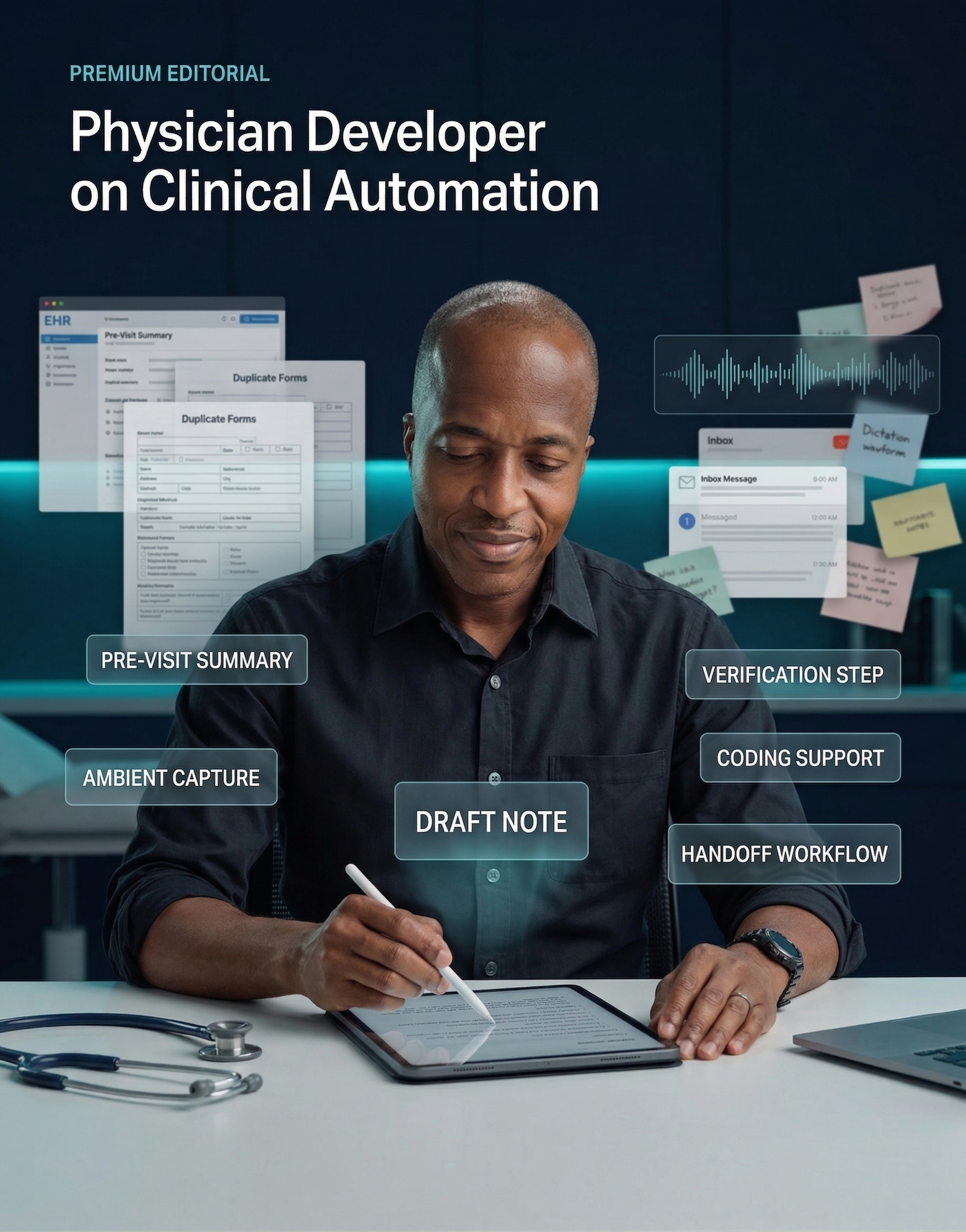

Agentic AI inverts this. In an agentic workflow, the physician is not the originator. The physician is the validator. The agent generates. The physician approves, refines, and signs. That is a completely different role. And we have built almost nothing to support it.

The charting interface is wrong. The review process is wrong. The training is wrong. The liability framework is wrong. The regulatory guidance is, with some notable exceptions, not yet written.

This is the gap that actually matters. Not model capability. Not inference speed. Not context windows. The gap between what agentic AI can do and what medicine can absorb is a gap in human infrastructure — in workflows, interfaces, training, and institutional readiness.

What I Am Doing About It

I am not writing this as an outside observer. I am in the middle of building against this problem every week.

CodeCraftMD started as a personal solution to a personal problem: I was spending too much time on documentation and not enough time on patients. The tools I built for my own practice have become a laboratory for understanding exactly where human workflows break down in the presence of AI assistance. The signout-to-APSO pipeline. The referral letter generator. The prescription workflow. Each one has taught me something different about where physicians get stuck — not because the AI failed, but because the human infrastructure around the AI was never designed to receive it.

The lesson I keep relearning is this: the bottleneck is not technical. It is organizational. It is cultural. It is the gap between what an agent can produce and what a physician is currently equipped to verify, trust, and integrate into their clinical identity.

Closing that gap is harder than training a model. It requires changing how physicians are trained, how EHRs are designed, how liability is assigned, and how clinical culture thinks about the relationship between human judgment and machine output.

It is also, I would argue, the most important engineering problem in medicine right now.

The Honest Assessment

The agentic future of medicine is not waiting on a better model. The models are already good enough to transform documentation, prior authorization, clinical decision support, and population health analytics. They have been good enough for longer than most people realize.

What the agentic future of medicine is waiting on is us.

It is waiting on EHR vendors to rebuild their interfaces for reviewers, not just authors. It is waiting on health systems to redesign oversight workflows so that they are fast enough to let agents actually accelerate care. It is waiting on physicians to develop a calibrated relationship with AI output: not blind trust, not reflexive skepticism, but disciplined, efficient verification.

And it is waiting on physician-developers — people who understand both the clinical reality and the technical architecture — to build the missing infrastructure layer that connects what agents can do to what medicine can actually use.

That is the work. Not the models.

The models are ready. The question is whether we are.

Dr. Chukwuma Onyeije is a Maternal-Fetal Medicine specialist and Medical Director at Atlanta Perinatal Associates. He writes about clinical AI, physician-developer practice, and the infrastructure of medical intelligence at DoctorsWhoCode.blog. He is the founder of CodeCraftMD and OpenMFM.org.
