Dead Weight: What a 1970 Cardiology Textbook Taught Me About the Future of Clinical Evidence

A half-century-old textbook on congenital heart disease. A chapter on Ebstein's anomaly. A comparison that changed how I think about evidence, AI, and what we owe the next generation of physicians.

I. The Giveaway Bin

The Emory University Hospital library was giving away books.

Free. First come, first served. The kind of institutional shedding that happens when a library runs out of shelf space and decides that older texts are liabilities rather than assets.

I almost walked past the table. I am a maternal-fetal medicine specialist. Cardiology is not my domain. But one spine caught my eye: The Clinical Recognition of Congenital Heart Disease by Joseph K. Perloff, first published in 1970. The cover was unremarkable. The binding was cracked. I picked it up the way you pick up a smooth rock at the beach, not because you have a plan for it, but because something in your hand says wait.

I took it home.

That night I opened to the chapter on Ebstein’s anomaly. I expected to be amused. What I found instead stopped me cold.

II. June, 1864

Perloff opens his chapter on Ebstein’s anomaly with a case history that reads more like a detective story than a textbook entry.

In June of 1864, a 19-year-old laborer was admitted to the All-Saints Hospital in Breslau. He died ten days later. Wilhelm Ebstein, the assistant physician and prosector, documented the clinical course and the autopsy findings in a paper he titled “On a Very Rare Case of Insufficiency of the Tricuspid Valve Caused by a Severe Congenital Malformation of the Same.”

That was 1866.

The next major milestone came in 1937, when Yater and Shapiro reported the first case in which radiologic and electrocardiographic data were available. In 1949, Tourniaire, Deyrieux, and Tartulier diagnosed the condition in a living patient for the first time, exactly 83 years after Ebstein’s original description. The first English-language accounts of cases diagnosed during life appeared in 1950 and 1951. One of those patients died six years later at age 40, and the clinical diagnosis was confirmed at autopsy.

Read that timeline again. Eighty-three years between the original description and the first living diagnosis.

The disease was real the entire time. The evidence was incomplete. The tools did not yet exist to close the gap.

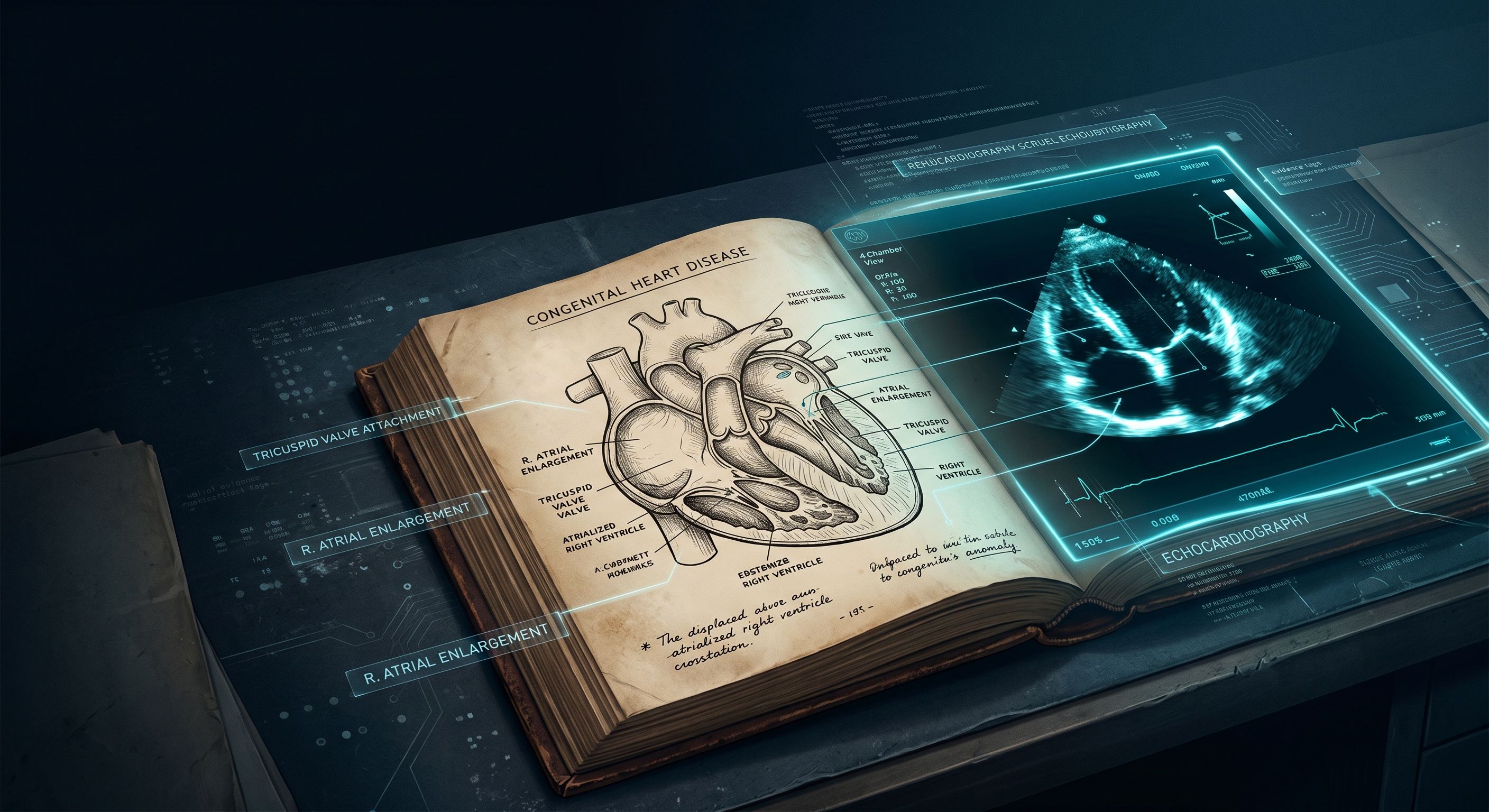

Perloff then turns to the anatomy, drawing directly on Ebstein’s own words. The basic anatomic fault, he writes, consists of displacement of fused, malformed portions of tricuspid valvular tissue into the right ventricular cavity. The leaflets attach in part to the tricuspid annulus and in part below it. Valvular tissue may be directly adherent to the ventricular endocardium or tethered to the ventricular wall by multiple, anomalous, short chordae tendineae. Ebstein himself recognized that “the chordae tendineae were attached mainly to the endocardium.”

The portion of the right ventricle underlying the adherent valvular tissue is thin and functions as a receiving chamber analogous to the right atrium. It registers a right atrial pressure pulse. This segment of right ventricle is therefore said to be “atrialized.”

That description is from 1970. It is also from 2026.

It has not changed.

Reading those pages, a specific word kept surfacing in my mind.

Bedrock.

The 1970 anatomical framework was not approximate. It was not a placeholder pending better technology. It was correct. Perloff and the clinicians whose work he synthesized were working with what they had: physical examination, electrocardiography, chest radiography, cardiac catheterization. What they produced from those tools was a precise, durable account of a structural cardiac defect that echocardiography would later confirm in exquisite detail.

Some of the diagnostic criteria were incomplete. Some were imprecise in ways we can now measure. But the underlying anatomy was right.

That matters enormously. I will explain why.

III. Ebstein’s Anomaly, 2026

The current diagnostic standard for Ebstein’s anomaly is transthoracic echocardiography.

Modern criteria define the condition by the degree of apical displacement of the septal tricuspid leaflet, indexed to body surface area. A displacement index greater than 8 mm/m² is the primary diagnostic threshold. Echocardiography characterizes leaflet tethering, the size and function of the atrialized right ventricle, the degree of tricuspid regurgitation, and associated lesions: atrial septal defects, accessory conduction pathways, left ventricular non-compaction.
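The indexing step is simple arithmetic. You divide the measured apical displacement of the septal leaflet (in millimeters) by body surface area (in square meters) and compare the result with the cutoff. The sketch below is illustrative only and is not a diagnostic tool. It assumes the Mosteller formula for body surface area, which the text does not specify. It uses this post's "greater than 8 mm/m²" wording; many sources phrase the cutoff as 8 mm/m² or more. The function names and the example patient are hypothetical.

```python
import math

# Cutoff as worded in this post ("greater than 8 mm/m^2"); many sources phrase it as >= 8.
DISPLACEMENT_INDEX_CUTOFF = 8.0  # mm/m^2


def mosteller_bsa(height_cm: float, weight_kg: float) -> float:
    """Body surface area in m^2 by the Mosteller formula (an assumed choice of formula)."""
    return math.sqrt(height_cm * weight_kg / 3600.0)


def displacement_index(septal_displacement_mm: float, bsa_m2: float) -> float:
    """Apical displacement of the septal tricuspid leaflet divided by BSA, in mm/m^2."""
    if bsa_m2 <= 0:
        raise ValueError("body surface area must be positive")
    return septal_displacement_mm / bsa_m2


def exceeds_cutoff(index_mm_per_m2: float, cutoff: float = DISPLACEMENT_INDEX_CUTOFF) -> bool:
    """True when the index is strictly above the cutoff, matching the post's wording."""
    return index_mm_per_m2 > cutoff


# Hypothetical adult: 170 cm, 68 kg, septal leaflet apically displaced by 20 mm.
bsa = mosteller_bsa(170, 68)        # about 1.79 m^2
idx = displacement_index(20, bsa)   # about 11.2 mm/m^2
print(f"BSA {bsa:.2f} m^2, index {idx:.1f} mm/m^2, above cutoff: {exceeds_cutoff(idx)}")
```

Indexing to body surface area lets one number serve patients of very different sizes. A 20 mm displacement means something different in a small child than in a large adult.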

Perloff’s 1970 illustration of the atrialized right ventricle, a hand-drawn cross-section showing the displaced tricuspid leaflet and the pressure tracings at each anatomical zone, is, structurally speaking, the same image that echocardiography now renders in real time. The anatomy he drew by hand is the anatomy we measure by ultrasound. The “atrialized” segment he described still behaves as his text said it would: a thin-walled receiving chamber that registers a right atrial pressure pulse.

As a maternal-fetal medicine specialist, I see this condition before birth. We can now identify Ebstein’s anomaly on fetal echocardiography by 18 to 22 weeks of gestation. Severe fetal Ebstein’s carries a mortality rate approaching 50% when accompanied by hydrops. We counsel families, coordinate delivery planning, and have neonatal cardiac surgery teams notified before the infant takes a first breath.

The 1970 physician had none of this. No real-time imaging. No fetal surveillance. No indexed displacement criteria. No prenatal diagnosis at all.

Here is what Perloff did have: a correct anatomical map, a logical clinical framework, and enough intellectual precision to know where the edges of his knowledge were.

He was doing exactly what we are doing. He was doing it without our instruments.

The echocardiogram did not change the question. It changed the resolution.

That is a distinction worth holding onto.

IV. The Half-Life of Clinical Evidence

Here is the problem.

Not all evidence ages at the same rate. Some clinical truths are durable: the anatomical basis of Ebstein’s anomaly, as described in 1970, is still correct. The tricuspid valve is still displaced. The right ventricle is still functionally reduced. The hemodynamic consequences are still what they are. This is bedrock knowledge. It has a long half-life.

Other clinical evidence is provisional: the specific thresholds, the risk stratification schemes, the surgical timing criteria. These are calibrated against the technology of their moment. They have a shorter half-life. They require revision as instruments improve.

The crisis in clinical medicine is not that we lack evidence. We have more evidence than any generation of physicians in history. The crisis is that we cannot reliably distinguish long-half-life evidence from short-half-life evidence. We cannot tell, in the press of a clinical encounter, which parts of what we know are bedrock and which parts are sand.

The 1970 textbook is not a relic. It is a calibration tool. Reading it tells you exactly where the bedrock is.

The anatomical description of Ebstein’s anomaly has not changed in more than fifty years. That is important clinical information.

The diagnostic criteria have changed substantially. That is also important clinical information.

The textbook that gets given away for free contains both.

V. The Distribution Problem

Here is a harder truth.

The 1970 physicians were not wrong. They were inaccessible.

Their best work was locked in a physical object on a physical shelf in a physical building. It traveled poorly. It updated slowly. It required physical proximity to access and hours of reading to extract. When new evidence arrived, there was no mechanism to push it to the clinician at the bedside. When old evidence proved durable, there was no mechanism to distinguish it from the evidence that had expired.

This is the distribution problem. And it has not been solved by the internet.

We now have more access to medical literature than at any point in history. PubMed indexes over 35 million citations. UpToDate hosts summaries of thousands of clinical topics. Clinical practice guidelines are published by every major specialty society on a rolling basis.

And physicians are still practicing on evidence that is years or decades out of date.

Not because they are lazy. Because the friction is too high. Because there is too much signal and too little infrastructure to separate it. Because pulling the right evidence at the right moment in the right clinical context requires time that a busy practitioner does not have.

The distribution problem is not a knowledge problem. It is an infrastructure problem.

VI. What Large Language Models Actually Do

This is where I want to slow down, because the conversation about LLMs in medicine tends to collapse into two opposing positions that are both wrong.

Position one: LLMs are going to replace clinical judgment. They will diagnose everything. The physician is obsolete.

Position two: LLMs hallucinate, therefore they are dangerous, therefore physicians should not use them for anything important.

Both positions miss the point.

LLMs are evidence navigators. They are not evidence generators.

A well-architected clinical LLM is not supposed to make things up and present them as fact. It retrieves, synthesizes, and surfaces existing evidence in response to a clinical query. The quality of that output depends above all on the quality of the corpus it draws from and the architecture that governs retrieval.

This is precisely what a platform like EvidenceMD is designed to do. Rather than asking a general-purpose model to recall clinical facts from its training data, which is where hallucination risk is highest, a grounded retrieval architecture queries a curated, citable evidence base and returns answers that are traceable to source documents.
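A toy sketch can make the phrase "grounded retrieval architecture" concrete. EvidenceMD's actual design is not described in this post, so everything below is hypothetical. That includes the `Passage` type, the `retrieve` and `grounded_answer` functions, the citation keys, and the corpus text, which is adapted from this post rather than from any real guideline. Word-overlap scoring stands in for the search index or embedding retrieval a production system would use. The sketch shows two structural points. Every line of the answer carries a pointer to its source. An empty retrieval produces a refusal, not an answer from memory.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source_id: str  # hypothetical citation key pointing back to a source document
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase word tokens; a deliberately crude stand-in for a real retriever."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: list[Passage], k: int = 2, min_overlap: int = 2) -> list[Passage]:
    """Rank passages by the number of query terms they share; drop weak matches."""
    q = tokenize(query)
    scored = [(len(q & tokenize(p.text)), p) for p in corpus]
    scored = [(score, p) for score, p in scored if score >= min_overlap]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:k]]


def grounded_answer(query: str, corpus: list[Passage]) -> str:
    """Answer only from retrieved passages, each tagged with its citation key."""
    hits = retrieve(query, corpus)
    if not hits:
        # Refuse rather than fall back on unsupported recall.
        return "No supporting evidence found in the curated corpus."
    return "\n".join(f"{p.text} [{p.source_id}]" for p in hits)


# Hypothetical corpus; the citation keys are placeholders, not real references.
CORPUS = [
    Passage("perloff-1970", "The basic anatomic fault in Ebstein's anomaly is displacement "
            "of malformed tricuspid valvular tissue into the right ventricular cavity."),
    Passage("current-guideline", "Diagnostic threshold for Ebstein's anomaly: apical displacement "
            "of the septal tricuspid leaflet greater than 8 mm/m2, indexed to body surface area."),
    Passage("unrelated-note", "Placeholder passage on an unrelated topic."),
]

print(grounded_answer("diagnostic threshold for Ebstein's anomaly septal leaflet displacement", CORPUS))
```

The scoring is crude, and a real system would replace it with a proper retriever. The refusal branch is the design choice that carries the argument. When the corpus has nothing relevant, the tool says so instead of producing a fluent, uncited answer.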

Think about what that means in practice.

A physician in the middle of a clinical encounter has a question about the current diagnostic criteria for Ebstein’s anomaly. She does not have time to open PubMed. She does not have time to navigate to the AHA/ACC guidelines. She has forty-five seconds.

An LLM-powered evidence tool can return a synthesized, cited answer in that window. The physician gets the 2026 diagnostic criteria, sourced to the relevant guideline, in about the time it takes to open a browser tab.

That is not artificial intelligence replacing clinical judgment. That is infrastructure finally catching up to the volume of evidence we have generated.

VII. Old Books Are a Feature

I want to make an argument that will sound counterintuitive.

We should be reading old medical textbooks. Systematically. Deliberately. Not for nostalgia. For calibration.

Here is the method. Take a clinical entity you manage regularly. Find the oldest rigorous text on it you can. Read the diagnostic criteria. Then pull the current criteria. Then ask three questions:

What has not changed? This is your bedrock. This is the evidence with the long half-life. Treat it as highly durable.

What has changed, and why? This tells you which tools drove the revision. It tells you where the next revision is likely to come from.

What has changed in ways that the new criteria obscure? Sometimes progress is not clean. Sometimes the precision of new tools crowds out clinical wisdom that the older framework preserved. The 1970 clinician, who had to make the diagnosis without echocardiography, developed a clinical pattern-recognition skill that we are at risk of losing.

LLMs can help with all three questions. A tool like EvidenceMD can surface both the historical and current literature, allowing the physician to perform this kind of comparative analysis without spending a weekend in the library.

The 1970 textbook became more valuable to me after I had access to the 2026 evidence. Not less.

Dead weight. That is what the library called it.

I disagree.

VIII. What We Owe 2076

I am a physician in 2026. I have access to fetal echocardiography, genomic sequencing, AI-assisted diagnostic tools, and a global literature database that would have been inconceivable to the author of that 1970 textbook.

What I produce with those tools will, if it is worth anything, still be worth something in 2076.

The question is whether it will be findable.

The 1970 textbook was findable because someone printed it, bound it, and shelved it. It survived. It landed in a giveaway bin more than fifty years later, and a physician picked it up, read it, and found it useful.

The clinical evidence we are producing right now is scattered across a thousand journals, a hundred preprint servers, a dozen guideline documents, and an unknowable number of conference presentations. It is more voluminous than anything the 1970 physicians could have imagined. It is also, in some ways, less durable.

Evidence infrastructure is not a luxury. It is an obligation.

Building tools that make evidence findable, navigable, and citable at the point of care is not an optional feature of modern medicine. It is what we owe to the patients who will be cared for by the physicians who come after us.

The physician who picks up our best work in 2076 should not find it in a giveaway bin.

She should find it in thirty seconds.

Chukwuma Onyeije, MD, FACOG is a Maternal-Fetal Medicine specialist, Medical Director at Atlanta Perinatal Associates, and the founder of CodeCraftMD and OpenMFM.org. He writes at DoctorsWhoCode.blog about building clinical tools at the intersection of medicine and software.
