The Thinking Partner Commands Every Physician Should Build

Slash commands that replace the morning literature review. /challenge, /emerge, /connect, /close-clinic, /graduate: clinical reasoning augmentation, not automation.

By now you have a vault full of compiled clinical knowledge. You have your institutional protocols, your evidence summaries, your curated literature.

Now comes the part that actually changes how you practice: turning that vault into a thinking partner.

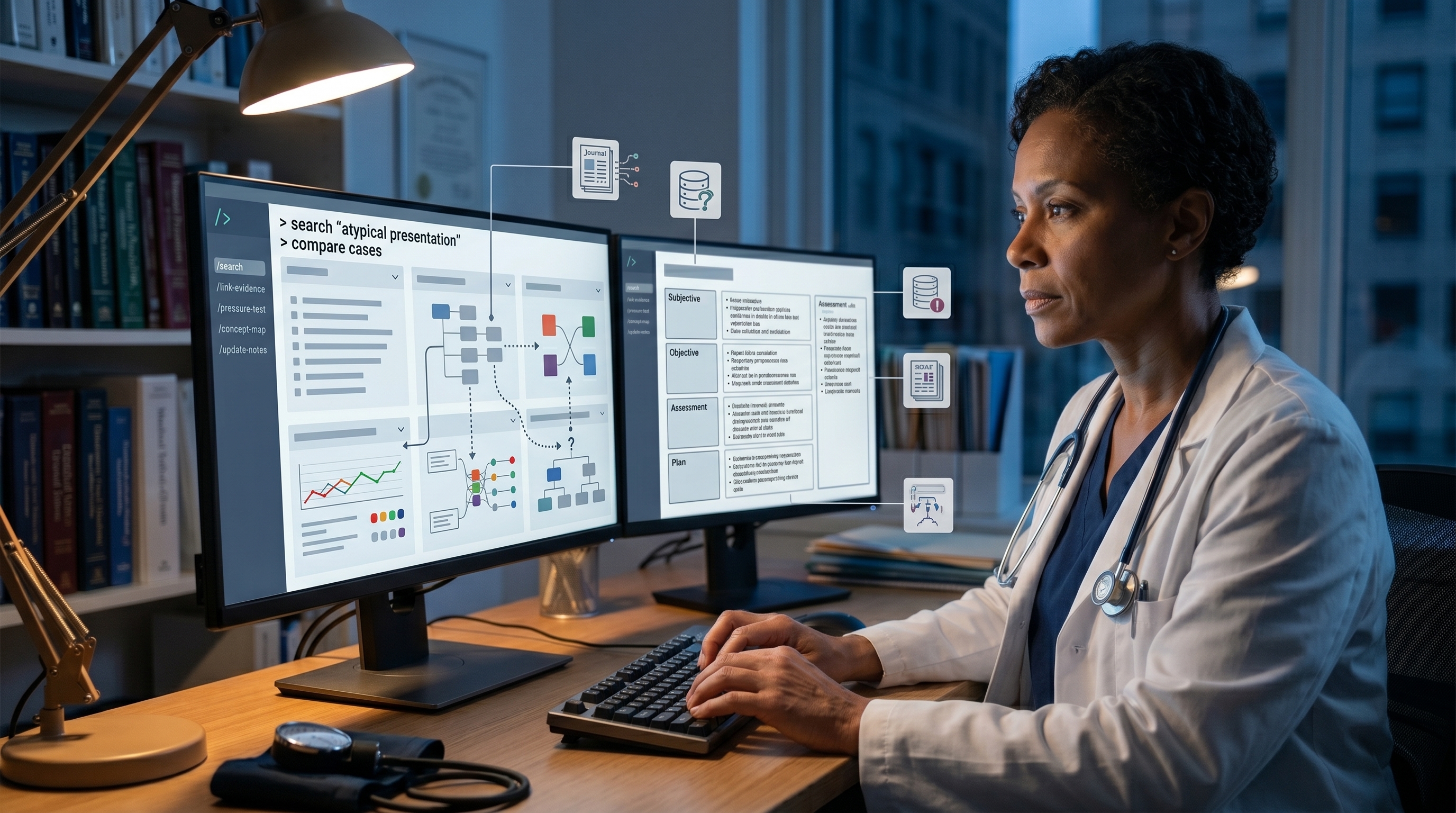

Not a scribe. Not a documentation tool. A genuine diagnostic and reasoning collaborator.

The mechanism is slash commands: simple text triggers that invoke sophisticated thinking routines against your vault. The difference between running /challenge and asking a generic LLM the same question is profound: the generic LLM knows medicine in general, while /challenge stays in your vault and knows your practice.

/challenge: Diagnostic Pressure Testing

You have a patient. You have a working diagnosis. You have already committed to a management plan.

You type into your vault: /challenge 34-week G2P1 with severe asymmetric FGR, EFW 1,600g, Doppler with absent end-diastolic velocity, AFI 4cm, BP 148/92. Working diagnosis: severe FGR with failed intervention, delivery indicated. Challenge this.

What happens next:

The AI retrieves your AEDV entity page, your periviability protocol, your preeclampsia risk assessment, your neonatal outcome data by gestational age. It reads your actual reasoning from the case notes in your human vault.

Then it does not agree with you.

It surfaces: three cases in your vault where you initially judged delivery indicated, delayed 24 hours, and saw the clinical picture change. Literature flagging that a maternal BP of 148/92 at 34 weeks is not a hypertensive emergency if proteinuria is absent. A decision tree from SMFM that separates “failed intervention” (which you met) from “true indication for delivery” (which may not be clearly met yet).

It does not tell you what to do. It tells you what you might be missing. It surfaces your own prior reasoning on similar cases. It flags where your current decision deviates from your own patterns.

This is not second-guessing. This is pressure-testing.
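The retrieval step that /challenge starts from can be pictured as ranking vault notes by how many terms they share with the typed presentation. This is a minimal sketch, assuming the vault is loaded as a list of hypothetical Note objects; plain keyword overlap stands in for whatever search or embedding index a real setup would use.

```python
import re
from dataclasses import dataclass


@dataclass
class Note:
    """Hypothetical in-memory stand-in for one vault page."""
    title: str
    body: str


def tokens(text: str) -> set[str]:
    """Lowercased alphanumeric tokens; a crude stand-in for real search."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, vault: list[Note], k: int = 3) -> list[Note]:
    """Return up to k notes sharing the most tokens with the query.

    Notes with zero overlap are dropped; ties keep vault order (stable sort).
    """
    query_tokens = tokens(query)
    scored = [(len(query_tokens & tokens(f"{n.title} {n.body}")), n) for n in vault]
    scored = [(score, n) for score, n in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [n for _, n in scored[:k]]


vault = [
    Note("AEDV", "absent end-diastolic velocity umbilical artery doppler fgr"),
    Note("Preeclampsia risk", "bp proteinuria severe range"),
    Note("Twin protocol", "monochorionic surveillance"),
]
hits = retrieve("34-week FGR, Doppler with absent end-diastolic velocity, BP 148/92", vault)
print([n.title for n in hits])  # ['AEDV', 'Preeclampsia risk']
```

A real vault would rank the physician's own case notes in the same pass, which is what lets the command surface prior reasoning and not only reference pages.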

/emerge: Surfacing Buried Insights

You have been managing a patient for months. You have notes scattered across your vault. You never quite articulated the pattern.

You type: /emerge 28-year-old with recurrent IUFD. Three consecutive pregnancies. Negative workup for antiphospholipid syndrome, negative for thrombophilia, cytomegalovirus IgG positive. Something is connecting these cases.

The AI reads all your IUFD case notes. It reads your vault on infectious etiologies of fetal loss, on CMV in pregnancy, on recurrent pregnancy loss algorithms.

Then it suggests: three of your case notes mention cytomegalovirus. One mentions that the patient had no immune workup. One mentions she had a transfusion in her 20s. And your compiled material on CMV reactivation in pregnant women with prior exposure but uncertain immune status is sparse.

The insight was always there. You never assembled it.
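The assembly step behind /emerge can be approximated by checking which concepts recur across several case notes. A minimal sketch, assuming case notes are plain strings keyed by hypothetical IDs and that a small alias map (here, CMV and cytomegalovirus) is maintained by hand; a real setup would lean on the language model rather than substring matching. The note excerpts are invented for illustration, not patient data.

```python
from collections import defaultdict


def recurring_concepts(
    notes: dict[str, str],
    concepts: dict[str, list[str]],
    min_notes: int = 2,
) -> dict[str, list[str]]:
    """Map each concept to the IDs of notes mentioning any of its aliases,
    keeping only concepts that appear in at least `min_notes` notes."""
    hits: dict[str, list[str]] = defaultdict(list)
    for note_id, text in sorted(notes.items()):
        lowered = text.lower()
        for concept, aliases in concepts.items():
            if any(alias.lower() in lowered for alias in aliases):
                hits[concept].append(note_id)
    return {c: ids for c, ids in hits.items() if len(ids) >= min_notes}


# Hypothetical case-note excerpts.
notes = {
    "iufd-1": "Fetal loss. CMV IgG positive.",
    "iufd-2": "No immune workup documented. Cytomegalovirus serology pending.",
    "iufd-3": "Transfusion in her 20s. CMV IgG positive.",
}
concepts = {
    "CMV": ["cmv", "cytomegalovirus"],
    "transfusion": ["transfusion"],
    "immune workup": ["immune workup"],
}
print(recurring_concepts(notes, concepts))
# {'CMV': ['iufd-1', 'iufd-2', 'iufd-3']}
```

Lowering `min_notes` to 1 also returns the one-off details (the transfusion, the missing workup), which is where a physician would look next.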

/connect: Cross-Domain Hypothesis Generation

You have a complex patient. The clinical picture does not fit any one diagnosis cleanly.

You type: /connect 31-week G3P0 with sudden-onset oligohydramnios AFI 3cm. Preeclampsia labs normal. Fetal biophysical profile normal. No infection markers. Fetal echo normal. Why would amniotic fluid suddenly decrease at 31 weeks with otherwise reassuring testing?

The AI does not start from a fixed list of diagnoses. It generates hypotheses by connecting across domains:

- Pathophysiology: Sudden oligohydramnios without an obvious cause could reflect renal or cardiac dysfunction that the standard biophysical profile does not catch

- Your institutional cases: One case of sudden oligohydramnios preceded a fetal cardiomyopathy diagnosis by 48 hours

- Literature: Your vault contains a review of non-immune hydrops that mentions oligohydramnios as a prodrome

- Pharmacology: The patient was on an ACE inhibitor for hypertension; the vault contains literature on ACE inhibitor effects on fetal renal function

The command does not give you the answer. It helps you hypothesize where the answer might live. Then you go looking.
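One way to picture the cross-domain step is to tag each vault note with a domain and group every note that shares a finding with the current case by that domain. A minimal sketch, assuming a hypothetical VAULT index whose notes carry hand-applied domain labels and finding tags; the titles paraphrase the examples above.

```python
from collections import defaultdict

# Hypothetical vault index: each note has a domain label and finding tags.
VAULT = [
    {"title": "ACE inhibitors and fetal renal function", "domain": "pharmacology",
     "tags": {"oligohydramnios", "ace-inhibitor"}},
    {"title": "Case: oligohydramnios before fetal cardiomyopathy", "domain": "institutional cases",
     "tags": {"oligohydramnios", "cardiomyopathy"}},
    {"title": "Review: non-immune hydrops", "domain": "literature",
     "tags": {"hydrops", "oligohydramnios"}},
    {"title": "Twin surveillance protocol", "domain": "protocols",
     "tags": {"twins"}},
]


def leads_by_domain(findings: set[str], vault: list[dict]) -> dict[str, list[str]]:
    """Group titles of notes sharing at least one finding with the case, by domain."""
    grouped: dict[str, list[str]] = defaultdict(list)
    for note in vault:
        if note["tags"] & findings:  # any shared finding counts as a lead
            grouped[note["domain"]].append(note["title"])
    return dict(grouped)


for domain, titles in leads_by_domain({"oligohydramnios"}, VAULT).items():
    print(f"{domain}: {titles}")
```

Each domain returned is a place to go looking, not an answer, which matches how the command is meant to be used.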

/close-clinic: End-of-Day SOAP Formatting

You have seen 15 patients. Your notes are fragmented: a few fully documented, several one-liners, several incomplete thoughts you intended to return to.

You type: /close-clinic at the end of the day.

The AI reads all of the day's scratchpad notes. For each one, it reconstructs the probable assessment and plan from:

- Your vault (what does your protocol say about this presentation?)

- Your historical notes (how have you managed similar cases?)

- Your reasoning (what were you circling in the one-liner?)

It outputs a structured SOAP template for each case. Not completed. But scaffolded. The subjective and objective are there. The assessment and plan have a framework.

You review. You edit. You finalize. The documentation tax drops.

This is not magic. This is your vault knowing your patterns well enough to predict the structure you are building.
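The sorting half of /close-clinic can be sketched without any model at all: route scratchpad lines into SOAP sections and leave explicit placeholders where the physician still has to decide. This assumes a hypothetical scratchpad convention in which lines start with S:, O:, A:, or P:; anything else is kept as a draft thought under the assessment. Reconstructing a probable assessment and plan from the vault would be the model's job on top of this scaffold.

```python
SECTIONS = ("S", "O", "A", "P")
PLACEHOLDER = "[to complete on review]"


def scaffold_soap(scratch: str) -> str:
    """Route scratchpad lines into SOAP sections; empty sections get a placeholder."""
    buckets: dict[str, list[str]] = {s: [] for s in SECTIONS}
    for raw in scratch.splitlines():
        line = raw.strip()
        prefix, sep, rest = line.partition(":")
        if sep and prefix.strip().upper() in buckets:
            buckets[prefix.strip().upper()].append(rest.strip())
        elif line:
            # Unlabeled one-liners are kept as draft assessment thoughts.
            buckets["A"].append(f"[draft] {line}")
    return "\n".join(f"{s}: {'; '.join(buckets[s]) or PLACEHOLDER}" for s in SECTIONS)


scratch = """S: headache x2 days
O: BP 142/90, no proteinuria on dipstick
recheck BP, PIH labs?"""
print(scaffold_soap(scratch))
```

Keeping the placeholder visible, rather than letting a model fill the plan silently, preserves the review-edit-finalize step described above.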

/graduate: Elevating Cases to Concepts

You have managed a fascinating case. You have synthesized your reasoning in your human vault. You have a strong sense that this case contains generalizable knowledge.

You type: /graduate bleeding-risk-case-032605 (the date-stamped case note in your human vault).

The AI reads the case. It identifies:

- What was clinically unusual about this case

- What decision points were non-obvious

- What changed your management approach

- Where your reasoning deviated from standard algorithms

- What the patient outcome teaches

It then drafts a candidate entity page. Example:

Entity: Placental Abruption with Delayed Presentation in the Setting of Chronic Hypertension

Sources: Your case 032605, ACOG guideline 2019, NEJM trial 2021

Evidence hierarchy: Institutional practice (1 case) + RCT backing dissemination strategy

Key points: [structured synthesis]

Decision tree: [when to manage expectantly vs. deliver]

Institutional note: [This differs from general ACOG because…]

You review. You edit. You decide: does this graduate to the AI Vault, or does it stay in your human vault?

If it graduates, it is now available for all future queries. A future case that reminds you of this one automatically surfaces it.
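The review gate is the important design choice in /graduate: nothing reaches the AI Vault without an explicit yes. A minimal sketch, assuming both vaults are plain folders of Markdown files; draft_entity_page and graduate are hypothetical helper names, and the page layout only loosely follows the example entity page above.

```python
import tempfile
from pathlib import Path
from typing import Optional


def draft_entity_page(title: str, sources: list[str], key_points: list[str]) -> str:
    """Render a candidate entity page; it stays a draft until reviewed."""
    lines = [f"# Entity: {title}", "", "Status: candidate (awaiting physician review)", "", "Sources:"]
    lines += [f"- {s}" for s in sources]
    lines += ["", "Key points:"] + [f"- {p}" for p in key_points]
    return "\n".join(lines) + "\n"


def graduate(page: str, slug: str, approved: bool, ai_vault: Path) -> Optional[Path]:
    """Write the page into the AI vault only after explicit approval."""
    if not approved:
        return None  # the case stays in the human vault only
    ai_vault.mkdir(parents=True, exist_ok=True)
    target = ai_vault / f"{slug}.md"
    target.write_text(page, encoding="utf-8")
    return target


page = draft_entity_page(
    "Placental Abruption with Delayed Presentation in the Setting of Chronic Hypertension",
    sources=["Case 032605"],
    key_points=["[structured synthesis]"],
)
with tempfile.TemporaryDirectory() as tmp:
    ai_vault = Path(tmp) / "ai-vault"
    print(graduate(page, "abruption-delayed-presentation", approved=False, ai_vault=ai_vault))
    written = graduate(page, "abruption-delayed-presentation", approved=True, ai_vault=ai_vault)
    print(written.name, written.read_text(encoding="utf-8").splitlines()[0])
```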

Why This Works

These commands work because they reason over your vault, not just over what a generic model already knows.

When you run /challenge, the reasoning engine knows:

- Your actual protocols

- Your actual case management patterns

- Where you have changed your mind and why

- Your patient population specifics

- Your risk tolerance and practice context

A generic AI cannot do this. It can debate and apply the Socratic method, sure. But it cannot pressure-test your reasoning against your actual practice.

Implementation: Claude Code as the Command Layer

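Each command is a Claude Code custom slash command: a Markdown prompt file, stored for project-level commands in the project's .claude/commands/ directory, that runs when you type the command name.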

```markdown
# Slash Command: /challenge

Trigger: /challenge [clinical presentation]

Behavior:

1. Retrieve the relevant entity pages from the vault (based on key clinical features)
2. Retrieve the physician's historical reasoning on similar cases
3. Look for contradictions between current reasoning and prior patterns
4. Look for contradictions between current reasoning and entity page guidance
5. Surface 2-3 specific points where the reasoning might be incomplete
6. Do NOT tell the physician what to do
7. Surface the reasoning process and the contradictions

Output format:

## Your challenge:
[clinical presentation]

## Pressure-test findings:
[2-3 specific points where reasoning might be incomplete]

## Your prior cases that inform this:
[links to vault cases with similar decision points]

## Entity pages consulted:
[relevant entity pages]

## Questions for you:
[3 Socratic questions that highlight the gaps]
```

Each command follows this pattern. It retrieves context from the vault, applies a specific reasoning framework, and surfaces what a generic model would miss because it does not know your practice.
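Claude Code looks for project-level custom slash commands as Markdown files under .claude/commands/, uses the file name as the command name, and substitutes $ARGUMENTS with whatever you type after the command. Assuming that convention (check the Claude Code documentation for your version), a small script can install the /challenge prompt. install_command and CHALLENGE_PROMPT are hypothetical names, and the prompt text is condensed from the /challenge specification.

```python
import tempfile
from pathlib import Path

# Condensed from the /challenge specification; $ARGUMENTS is filled in by Claude Code.
CHALLENGE_PROMPT = """Pressure-test this working diagnosis against the vault: $ARGUMENTS

1. Retrieve relevant entity pages and the physician's prior cases with similar decision points.
2. Look for contradictions with prior patterns and with entity page guidance.
3. Surface 2-3 points where the reasoning might be incomplete.
4. Do NOT tell the physician what to do.

Answer under these headings: Your challenge, Pressure-test findings,
Your prior cases that inform this, Entity pages consulted, Questions for you.
"""


def install_command(project_root: Path, name: str, prompt: str) -> Path:
    """Write <project_root>/.claude/commands/<name>.md and return its path."""
    commands_dir = project_root / ".claude" / "commands"
    commands_dir.mkdir(parents=True, exist_ok=True)
    path = commands_dir / f"{name}.md"
    path.write_text(prompt, encoding="utf-8")
    return path


# Demo in a throwaway directory; point project_root at your vault to install for real.
with tempfile.TemporaryDirectory() as tmp:
    installed = install_command(Path(tmp), "challenge", CHALLENGE_PROMPT)
    print(installed.relative_to(tmp).as_posix())  # .claude/commands/challenge.md
```

The same helper installs /emerge, /connect, /close-clinic, and /graduate; only the prompt text changes.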

The Flywheel Effect

After six months of using these commands, something shifts.

You notice that /challenge catches errors before you commit to them. /emerge surfaces patterns you never knew you had. /close-clinic saves an hour a day on documentation.

More importantly: you notice that the commands get smarter.

Early on, /challenge surfaces general pushback. After six months of ingests, it knows your specific risk thresholds, your institutional protocols, your practice biases. It surfaces pressure-testing that is actually relevant to you, not generic second opinions.

This is the compounding effect of your vault.

Key Takeaways

- Slash commands are thinking-partner operations, not automation

- /challenge pressure-tests your reasoning against your vault and patterns

- /emerge surfaces patterns buried in your case history

- /connect generates cross-domain hypotheses

- /close-clinic scaffolds end-of-day documentation

- /graduate elevates cases to generalizable clinical knowledge

- All commands operate on your vault, not generic models

- Effectiveness compounds as your vault grows
