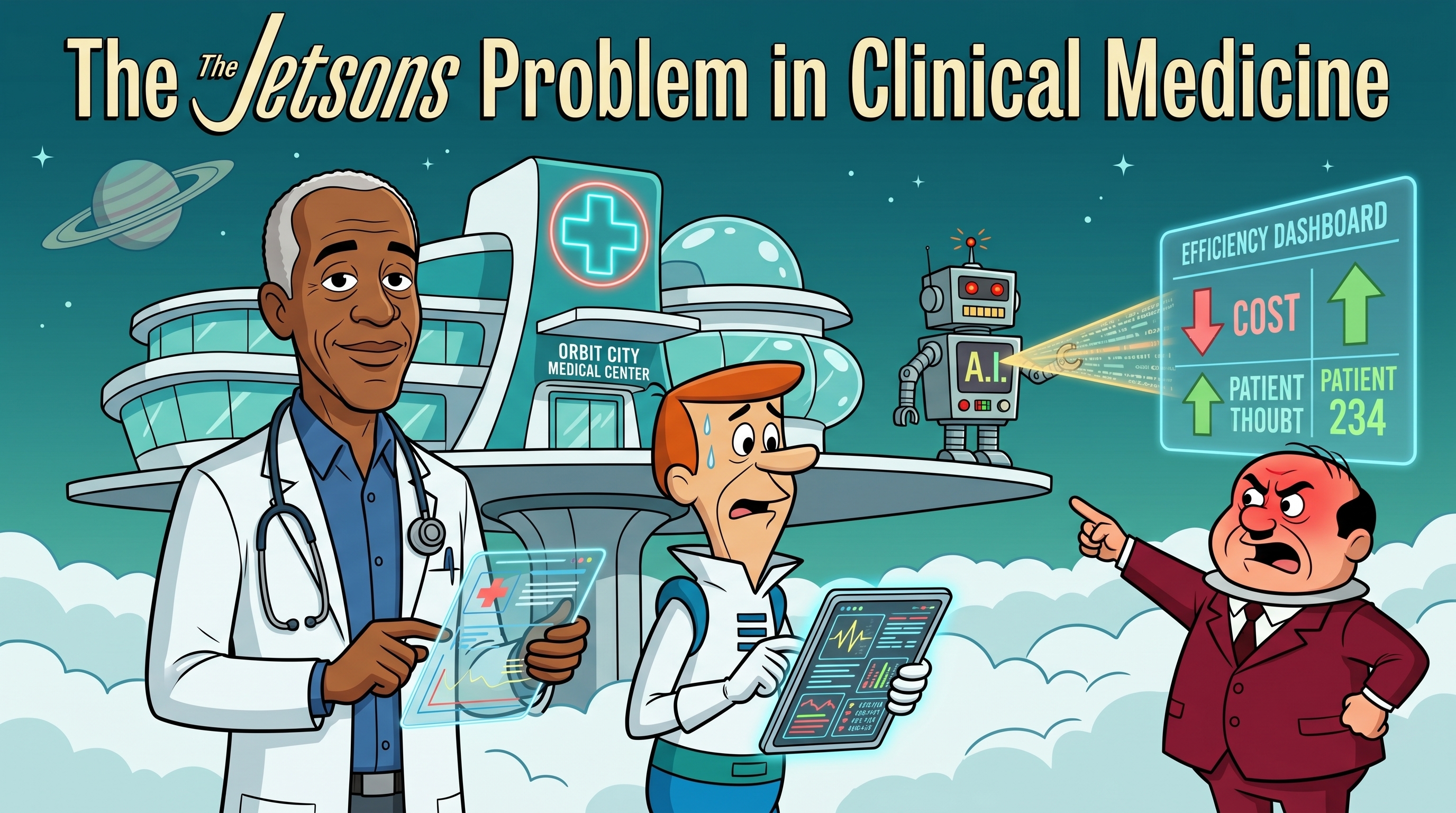

The Jetsons Problem in Clinical Medicine

Artificial intelligence in clinical medicine promises abundance. The real risk is not too little data, but too little agency, attention, and equity.

Artificial intelligence in clinical medicine does not fail only when the models are weak. It fails when abundance is handed to systems still optimized for control.

George Jetson had a robot maid, a flying car, and a button that did most of his work.

He also had a boss who could make his life miserable on demand.

That is the wrong future for medicine.

When people talk about artificial intelligence in clinical medicine, they usually talk as if more automation will naturally produce more freedom, more time, and better care. The framing is wrong.

Technology can create abundance. It does not automatically redistribute power. If the architecture stays the same, the machine just makes the old hierarchy faster.

That is the Jetsons problem.

Post-Scarcity Never Solved the Power Problem

Post-scarcity stories are often misread as stories about comfort. They are really stories about control.

Iain M. Banks imagined a civilization where material need had largely disappeared. Cory Doctorow imagined a world where reputation became the new scarce resource. E.M. Forster, writing long before modern computing, imagined a society so dependent on its machine that it forgot how to live without it.

Different worlds. Same warning.

Scarcity does not vanish. It relocates.

If food becomes abundant, status becomes scarce. If labor becomes automated, attention becomes scarce. If information becomes cheap, judgment becomes more valuable and more contested.

Medicine is walking directly into that pattern.

We are being told that clinical AI will give us post-scarcity medicine: infinite drafts, infinite summaries, infinite coding suggestions, infinite triage support, infinite documentation help.

Maybe.

But abundance of output is not the same thing as abundance of agency.

What the Jetsons Problem Looks Like in Clinical AI

1. More Output Is Not More Judgment

AI can generate differentials, risk scores, note drafts, imaging summaries, patient messages, and utilization recommendations at a speed no human can match.

That matters. It is also not the hardest part of medicine.

The hardest moments in clinical care are still the moments where a physician has to hold uncertainty, context, and moral consequence at the same time. A conversation about a severe fetal anomaly. A goals-of-care discussion. A limits-of-viability counseling session at 23 weeks. The machine can organize information around those moments. It cannot carry them.

When people confuse output abundance with clinical wisdom, they mistake throughput for judgment.

Those are not the same thing.

2. The Tool Serves Its Owner

This is the part too many clinical AI conversations politely avoid.

Every model is optimized for something. Every workflow automation encodes a set of incentives. Every dashboard has an implied customer.

If the primary customer is the health system, the tool will optimize for system visibility. If the primary customer is the payer, the tool will optimize for utilization control. If the primary customer is the physician and the patient, the tool can be designed to protect reasoning, presence, and care quality.

That is why ownership matters.

The danger is not that AI becomes intelligent enough to replace physicians. The danger is that AI becomes operational enough to manage physicians on behalf of institutions that were already over-optimized for compliance, billing, and surveillance.

George Jetson was surrounded by powerful machines. None of them gave him leverage over Mr. Spacely.

That is exactly how clinicians should think about vendor-controlled AI.

3. Information Abundance Creates Attention Scarcity

In a high-AI environment, the scarce resource will not be information.

It will be attention.

A physician can only review so many autogenerated drafts, alerts, suggestions, confidence scores, inbox messages, and decision prompts before the cognitive overhead becomes its own pathology. This is one reason I keep coming back to the argument that clinical documentation automation should remove friction, not replace the doctor.

The best clinical systems do not just generate more. They reduce the number of places where the physician has to spend scarce attention.

If an AI system multiplies documentation artifacts, multiplies review burden, and multiplies oversight without protecting presence, it has not saved the clinician. It has simply moved the work into a different interface.

4. Equity Does Not Arrive Just Because the Model Scales

This is where the rhetoric gets especially dangerous.

People talk about AI in healthcare as if scale automatically produces fairness. It does not.

Models trained in data-rich academic centers do not automatically generalize to rural hospitals, under-resourced clinics, safety-net systems, or populations historically excluded from high-quality datasets. In maternal health, that matters immediately. The burden of harm is not evenly distributed, and model failure will not be evenly distributed either.

If a clinical AI system performs worst in the very communities already carrying the highest burden of delayed diagnosis, fragmented care, and preventable mortality, then scale has not reduced inequity. It has industrialized it.

Abundance for the already-advantaged is not post-scarcity medicine.

It is just better software for the people who already had access.

5. Faster Communication Can Still Hollow Out Trust

Trust is not a soft extra in medicine. It is infrastructure.

Patients disclose more when they trust you. They return sooner. They tell you when they did not take the medication. They tell you when the diagnosis does not fit the reality of their life. That information changes care.

Now layer AI across the encounter: AI-generated intake summaries, AI-drafted portal replies, AI-produced after-visit summaries, AI-assisted counseling prompts. Every individual layer looks reasonable. Collectively, they can create a clinical experience that is more efficient and less human.

That is the risk.

Not bad grammar. Not hallucinated discharge instructions. Those matter too. But the deeper risk is a clinical relationship that becomes informationally dense and relationally thin.

Medicine cannot afford that trade.

6. Automation Can Quietly Deskill the Physician

This is the slow failure mode, which is usually the most dangerous one.

If trainees start outsourcing first-pass reasoning before they have built durable internal models, they do not become augmented. They become dependent.

There is a real difference between using AI to challenge your thinking and using AI to substitute for thinking you never fully built. One strengthens judgment. The other erodes it.

That matters in every field. It matters even more in medicine, where the moment the model is wrong is often the exact moment the physician has to be most independently capable.

This is why I am skeptical of any AI training story that focuses only on access and speed. The question is not whether the learner gets an answer faster. The question is what kind of clinician the system is shaping over five years.

Forster saw this problem early. Once the machine mediates every layer of reality, people stop knowing how to generate knowledge without it.

Medicine should take that warning seriously.

The Builder’s Question

So what is the alternative?

Not rejection.

Not nostalgia.

And certainly not passive consumption.

The answer is physician-builders.

The physician-builder's value does not come from knowing how to prompt a model or call an API. It comes from the fact that medicine needs people who can see the objective function, the workflow, the moral stakes, and the failure modes at the same time.

That is a rare combination. It matters more now than ever.

I have made this argument before in a slightly different form: doctors who code should build systems, not just models. The same principle applies here.

Clinical AI should not be evaluated by how impressive the demo looks. It should be evaluated by who it serves, what it protects, where it fails, and whether the clinician still has agency when it matters.

What Physician-Builders Should Do Now

Own the Objective Function

If you do not know what the tool is optimizing for, you do not know what it will eventually do to your workflow.

A physician does not need to build every product from scratch. But we do need enough technical literacy to interrogate the architecture: what is being predicted, what is being maximized, which errors are tolerated, which populations were represented, and which stakeholder is treated as the real customer.

Those are clinical questions now.

Build for the Places the System Is Most Likely to Fail

Do not validate only where the data are clean.

Validate where the workflow is messy. Validate where follow-up is inconsistent. Validate where resources are constrained. Validate where language, geography, race, and insurance status make care more fragile.

If a model cannot hold up there, then the story we are telling about democratization is mostly branding.

Protect the Irreducibly Human Layer

Some parts of medicine should be accelerated. Some parts should be defended.

I want AI help in summarization, structured extraction, prior authorization support, and repetitive workflow assembly. I do not want the field to forget that certain moments in care require uncompressed human attention.

That line has to be drawn deliberately. Otherwise efficiency culture will draw it for us.

Teach Interrogation, Not Just Adoption

The next generation of physicians should learn how to question models, not just use them.

What population was this validated on? What is the false negative rate in the subgroup I am treating? What assumptions are embedded in the risk score? What happens when the output conflicts with the bedside reality?

That is not computer science trivia.

That is clinical literacy in the age of AI.
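
The subgroup question above is also one of the easiest to check in practice. Here is a minimal Python sketch of a false negative rate broken out by subgroup. Everything in it is an assumption made for illustration: the function name, the subgroup labels, and the toy records are hypothetical and do not come from any real model or dataset.

```python
def false_negative_rate_by_subgroup(records):
    """Compute the false negative rate for each subgroup.

    records: iterable of (subgroup, y_true, y_pred) with 0/1 labels.
    Returns {subgroup: rate}, where rate is None when the subgroup
    has no true positive cases to miss.
    """
    positives = {}  # count of true condition-present cases per subgroup
    misses = {}     # count of those cases the model called negative
    for subgroup, y_true, y_pred in records:
        positives.setdefault(subgroup, 0)
        misses.setdefault(subgroup, 0)
        if y_true == 1:
            positives[subgroup] += 1
            if y_pred == 0:
                misses[subgroup] += 1
    return {
        group: (misses[group] / count if count else None)
        for group, count in positives.items()
    }


# Hypothetical toy data: (subgroup, true label, model prediction).
records = [
    ("academic_center", 1, 1),
    ("academic_center", 1, 1),
    ("academic_center", 1, 1),
    ("academic_center", 1, 0),
    ("academic_center", 0, 0),
    ("rural_clinic", 1, 1),
    ("rural_clinic", 1, 1),
    ("rural_clinic", 1, 0),
    ("rural_clinic", 1, 0),
    ("rural_clinic", 0, 0),
]

rates = false_negative_rate_by_subgroup(records)
for group, rate in rates.items():
    label = "n/a" if rate is None else f"{rate:.2f}"
    print(f"{group}: false negative rate = {label}")
```

In this toy data the model misses one of four true cases in the first subgroup and two of four in the second. A single pooled number would hide that gap, which is the point of asking the question per subgroup. A real validation would also need adequate sample sizes and confidence intervals, which this sketch leaves out.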

The Future Worth Building

The Jetsons got one thing right: the machines are coming.

What they got wrong was the politics of abundance.

That is the part medicine still has time to change.

Artificial intelligence in clinical medicine can absolutely make care more responsive, more informed, and less administratively punishing. I believe that strongly enough to keep building toward it. But none of those gains arrive by default. They have to be designed, governed, validated, and defended.

If we let market incentives alone define the future, we will get abundant outputs attached to the same old structures of control. More surveillance dressed up as support. More optimization for the institution than for the patient. More clerical speed without more clinical freedom.

That is the Jetsons future.

I am not interested in that future.

I want physician-built systems that return time to care, preserve independent judgment, widen access without widening harm, and keep the human relationship at the center of medicine.

That future is still available.

But it will not be delivered to us.

We have to build it.

Related Reading

- Clinical Documentation Automation Should Remove Friction, Not Replace the Doctor
- Doctors Who Code: Build Systems, Not Just Models
- How I Used Code and AI to Fix One of Healthcare’s Most Painful Bottlenecks: Prior Authorizations
- The Limits of Viability

Chukwuma Onyeije, MD, FACOG, is a Maternal-Fetal Medicine specialist and Medical Director at Atlanta Perinatal Associates in Atlanta, Georgia. He is the founder of CodeCraftMD and OpenMFM.org and writes about clinical AI, physician-developer practice, and software architecture in medicine at DoctorsWhoCode.blog.
